Microsoft

stopped to offer an updates on its Connector for SAP BW for MS SQL Server some

time ago. See their announcement about it here.

Instead, they suggest to use 3rd party software from Microsoft ISV

partners such as a Theobald

Xtract IS to act as plug-in for the SQL Server Integration Services (SSIS)

of the MS SQL Server.

It

happened that I worked with this software some time ago. At my client, we used

it to extract data from SAP BW to MS SQL server. We used Theobald Xtract IS in

particular Xtract OHS (Open Hub Service) component. In our case, there was a

Process Chain at SAP BW side that was triggered from Xtract IS. The Chain

prepared data into Open Hub and the Xtract IS pulled data from the OH to MS

SQL.

Here

some challenges I faced while deploying integration scenarios:

1. No authorization: In case that SAP BW user that is used

for an extraction is not authorized to read the data from the BW source object (OH)

there can be following logs spotted at MS SQL side:

Error:

0xC0047062 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

Xtract OHS [8]: Theobald.Extractors.Table.TheoReadTableException: User not

allowed to access table /BIC/OH<OH_tech_name>.

at

Theobald.Extractors.Table.TheoReadTableExtractor.Run(Action`1 processPackage,

CancellationToken cancellationToken)

at

Theobald.Extractors.Common.ExtractorBase`1.Run(Action`1 processResult,

LoggerBase logger, CancellationToken cancellationToken)

Whereas from

above log it is clear that the authorization is an issue there can also below error

logs that is not pointing to the authorization being as the issue:

Information: 0x3E8 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

XtractIS: [2022-05-05T11:09:42.662+02:00] Xtract IS Version 6.7.5.1

Information: 0x3E8 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

XtractIS: [2022-05-05T11:09:42.676+02:00] Xtract Kernel Version 5.1.4.95

Information: 0x3E8 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

XtractIS: [2022-05-05T11:09:42.677+02:00] Theobald.Extractors Version 1.20.14.1

Information: 0x3E8 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

XtractIS: [2022-05-05T11:09:42.687+02:00] Component is licensed to xxx

Information: 0x3E8 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

XtractIS: [2022-05-05T11:09:43.273+02:00] Connected to '<hostname>',

system number <xx>

Information: 0x3E8 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

XtractIS: [2022-05-05T11:09:43.284+02:00] Current Windows identity: <user>

Information: 0x3E8 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

XtractIS-OhsExtractorTable: [2022-05-05T11:09:43.301+02:00] Starting process

chain '<PROCESS_CHAIN>'...

Error: 0xC0047062 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

Xtract OHS [8]: ERPConnect.ABAPProgramException:

RfcInvoke failed(RFC_ABAP_EXCEPTION): FAILED

at ERPConnect.NW.NwApi.CallFunction(IntPtr client,

IntPtr function)

at ERPConnect.NW.NwClientFunction.CallApi()

at ERPConnect.NW.NwClientFunction.Execute(String tid,

String queue)

at

ERPConnect.RFCFunction.ExecuteImplementation(String tid, String queue)

at ERPConnect.RFCFunction.Execute()

at

Theobald.Extractors.Ohs.OhsExtractorBase.StartProcessChain()

at Theobald.Extractors.Ohs.OhsExtractorTable.Run(Action`1

processResult, CancellationToken cancellationToken)

at

Theobald.Extractors.Common.ExtractorBase`1.Run(Action`1 processResult,

LoggerBase logger, CancellationToken cancellationToken)

at Theobald.Extractors.Common.ExtractorBase`1.Run(Action`1

processResult, LoggerBase logger)

at XtractIS.XtractSourceOHS.PrimeOutput(Int32

outputs, Int32[] outputIDs, PipelineBuffer[] buffers)

at

Microsoft.SqlServer.Dts.Pipeline.ManagedComponentHost.HostPrimeOutput(IDTSManagedComponentWrapper100

wrapper, Int32 outputs, Int32[] outputIDs, IDTSBuffer100[] buffers, IntPtr

ppBufferWirePacket)

Error: 0xC0047038 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

SSIS.Pipeline: SSIS Error Code DTS_E_PRIMEOUTPUTFAILED. The PrimeOutput

method on Xtract OHS returned error code 0x80131500. The component

returned a failure code when the pipeline engine called PrimeOutput(). The

meaning of the failure code is defined by the component, but the error is fatal

and the pipeline stopped executing. There may be error messages posted

before this with more information about the failure.

Solution is to review what

authorization the extraction user at SAP BW side has. Identify what is missing

and add those missing authorization objects into a role that user has assigned

to. In case SAP BW objects are being extracted, you need to trace the extraction

to see what authorization is needed. In case of extraction from SAP tables also

following authorization is needed: S_TABU_NAM ACTVT=03; TABLE=ENLFDIR

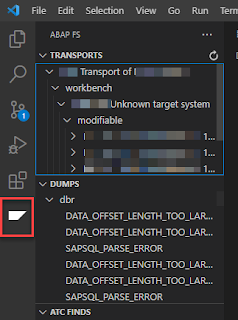

2. Memory exhausting

issue: In case that MS

SQL loads from multiple OHs in parallel, there can be a memory issues. At SAP BW

side there is a following ABAP dump:

Short

Text "connection closed (no

data)"

What

happened? Error in the ABAP application program. The current ABAP program "SAPLZ_THEO_READ_TABLE"

had to be terminated because it found a statement that could not be executed.

And

at MS SQL side there is a following error logs:

Error:

0xC0047062 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

Xtract OHS [46]: ERPConnect.ERPException: RfcGetFunctionName

failed(RFC_INVALID_HANDLE): RFC_FUNCTION_DESC_HANDLE handle 0544DEA0 was

already removed

at ERPConnect.NW.NwApi.CallFunction(IntPtr client, IntPtr function)

at ERPConnect.NW.NwClientFunction.CallApi()

at ERPConnect.NW.NwClientFunction.Execute(String tid, String queue)

at ERPConnect.RFCFunction.ExecuteImplementation(String tid, String queue)

at ERPConnect.RFCFunction.Execute()

at Theobald.Extractors.Table.TheoReadTableExtractor.Run(Action`1

processPackage, CancellationToken cancellationToken)

at Theobald.Extractors.Common.ExtractorBase`1.Run(Action`1 processResult,

LoggerBase logger, CancellationToken cancellationToken)

at Theobald.Extractors.Ohs.OhsExtractorTable.Run(Action`1 processResult,

CancellationToken cancellationToken)

at Theobald.Extractors.Common.ExtractorBase`1.Run(Action`1 processResult,

LoggerBase logger, CancellationToken cancellationToken)

at Theobald.Extractors.Common.ExtractorBase`1.Run(Action`1 processResult,

LoggerBase logger)

at XtractIS.XtractSourceOHS.PrimeOutput(Int32 outputs, Int32[] outputIDs,

PipelineBuffer[] buffers)

at

Microsoft.SqlServer.Dts.Pipeline.ManagedComponentHost.HostPrimeOutput(IDTSManagedComponentWrapper100

wrapper, Int32 outputs, Int32[] outputIDs, IDTSBuffer100[] buffers, IntPtr

ppBufferWirePacket)

Error:

0xC0047038 at TRIGGER PROCESS CHAIN <PROCESS_CHAIN>,

SSIS.Pipeline: SSIS Error Code DTS_E_PRIMEOUTPUTFAILED. The PrimeOutput

method on Xtract OHS returned error code 0x80131500. The component

returned a failure code when the pipeline engine called PrimeOutput (). The

meaning of the failure code is defined by the component, but the error is fatal

and the pipeline stopped executing. There may be error messages posted

before this with more information about the failure.

Or

Error:

0xC0047038 at TRIGGER PROCESS CHAIN SKU TO MSBI Q4, SSIS.Pipeline: SSIS Error

Code DTS_E_PRIMEOUTPUTFAILED. The PrimeOutput method on Xtract OHS

returned error code 0x80131500. The component returned a failure code

when the pipeline engine called PrimeOutput(). The

Error:

0xC0047062 at TRIGGER PROCESS CHAIN SKU TO MSBI Q1, Xtract OHS [46]:

Theobald.Extractors.Table.TheoReadTableException: Shared memory ran out of memory. Try setting

package size lower.

Error:

0xC0047038 at TRIGGER PROCESS CHAIN SKU TO MSBI Q1, SSIS.Pipeline: SSIS Error

Code DTS_E_PRIMEOUTPUTFAILED. The PrimeOutput method on Xtract OHS

returned error code 0x80131500. The component returned a failure code

when the pipeline engine called PrimeOutput(). The meaning of the failure code

is defined by the component, but the error is fatal and the pipeline stopped

executing. There may be error messages posted before this with more

information about the failure.

From the 2nd error log it is clear that it is related

to the memory. Solution is to review setup of the flow at MS SQL. In particular,

you need to play around a package size of OHS

extraction available in OHS Settings:

3. OPD extraction: There may be the case that

purely OHS based extraction will not be stable enough, as there is high data

volume to be extracted. Here OPD extractor

can be better choice. From my experience, the OPD extractor is more robust as I

enjoyed less issues related to memory. Also with respect to setup of the OPD

process there is an option to specify a package size available under Advanced

settings. Even a default value worked for me well. One drawback of the OPD extractor is

that there is no option to run the Process Chain prior data extraction as it is

possible via OHS

extraction component. Thus, I created flow in which OHS part is to trigger the

Chain and OPD part is to load the data to MS SQL itself.

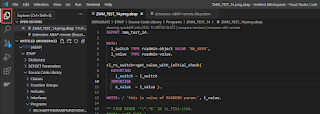

4. Version of function

module Z_THEO_READ_TABLE: This

FM is responsible of extracting the data. Depending on its version, there are

multiple options that can be leveraged. As always as it comes to any software

being on higher version brings more benefits. You can find what version of the

FM you have in its source code. In the top part in comments there is a version

mentioned. The version I worked was 2.11 released in Feb of 2022. In case you

face extraction issues perhaps quick win can be to upgrade the FM.